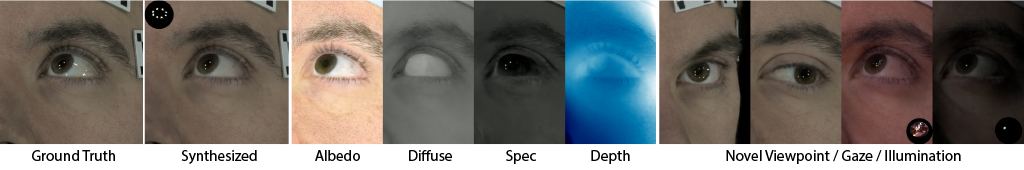

A unique challenge in creating high-quality animatable and relightable 3D avatars of real people is modeling human eyes, particularly in conjunction with the surrounding periocular face region. The challenge of synthesizing eyes is multifold, as it requires 1) appropriate representations for the various components of the eye and the periocular region for coherent viewpoint synthesis, capable of representing diffuse, refractive and highly reflective surfaces, 2) disentangling skin and eye appearance from environmental illumination so that both can be rendered under novel lighting conditions, and 3) capturing eyeball motion and the deformation of the surrounding skin to enable re-gazing.

These challenges have traditionally necessitated the use of expensive and cumbersome capture setups to obtain high-quality results, and even then, holistic modeling of the full eye region has remained elusive. We present a novel geometry and appearance representation that enables high-fidelity capture and photorealistic animation, view synthesis and relighting of the eye region using only a sparse set of lights and cameras. Our hybrid representation combines an explicit parametric surface model for the eyeball surface with implicit deformable volumetric representations for the periocular region and the interior of the eye. This novel hybrid model has been designed specifically to address the various parts of that exceptionally challenging facial area: the explicit eyeball surface allows modeling refraction and high-frequency specular reflection at the cornea, whereas the implicit representation is well suited to modeling lower-frequency skin reflection via spherical harmonics and can represent non-surface structures such as hair (e.g. eyebrows) or highly diffuse volumetric bodies (e.g. the sclera), both of which are a challenge for explicit surface models. Tightly integrating the two representations in a joint framework allows controlled photoreal image synthesis and joint optimization of both the geometry parameters of the eyeball and the implicit neural network in continuous 3D space. We show that for high-resolution close-ups of the human eye, our model can synthesize high-fidelity animated gaze from novel views under unseen illumination conditions, enabling the generation of visually rich eye imagery.
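To make the role of the explicit eyeball surface more concrete, the following is a minimal sketch of how a camera ray could be bent at an explicit surface using the vector form of Snell's law. It is an illustration only, not the paper's implementation: the function names, the flat-normal test setup, and the corneal refractive index of 1.376 (a commonly cited textbook value) are all assumptions introduced here.

```python
import math

# Assumed value for illustration; not taken from the paper.
CORNEA_IOR = 1.376


def normalize(v):
    """Return v scaled to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def dot(a, b):
    """Dot product of two 3-vectors."""
    return sum(x * y for x, y in zip(a, b))


def refract(direction, normal, eta):
    """Refract a ray at a surface using the vector form of Snell's law.

    direction: incident ray direction (pointing towards the surface).
    normal:    surface normal, pointing against the incident ray.
    eta:       ratio n_incident / n_transmitted of refractive indices.
    Returns the unit refracted direction, or None on total internal reflection.
    """
    d = normalize(direction)
    n = normalize(normal)
    cos_i = -dot(n, d)                       # cosine of the incidence angle
    sin2_t = eta * eta * (1.0 - cos_i * cos_i)  # squared sine of the refraction angle
    if sin2_t > 1.0:
        return None                          # total internal reflection
    cos_t = math.sqrt(1.0 - sin2_t)
    k = eta * cos_i - cos_t
    return tuple(eta * dc + k * nc for dc, nc in zip(d, n))


# Example: a ray entering the cornea from air at 30 degrees to the normal.
incident = (math.sin(math.radians(30)), 0.0, -math.cos(math.radians(30)))
refracted = refract(incident, (0.0, 0.0, 1.0), 1.0 / CORNEA_IOR)
```

With an explicit surface, the normal at the hit point is available in closed form, so a refracted ray like this can continue into the volumetric representation of the eye interior; a purely volumetric model has no sharp interface at which to apply this step.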

@article{10.1145/3528223.3530130,
  author = {Li, Gengyan and Meka, Abhimitra and Mueller, Franziska and Buehler, Marcel C. and Hilliges, Otmar and Beeler, Thabo},
  title = {EyeNeRF: A Hybrid Representation for Photorealistic Synthesis, Animation and Relighting of Human Eyes},
  year = {2022},
  issue_date = {July 2022},
  publisher = {Association for Computing Machinery},
  address = {New York, NY, USA},
  volume = {41},
  number = {4},
  issn = {0730-0301},
  url = {https://doi.org/10.1145/3528223.3530130},
  doi = {10.1145/3528223.3530130},
  journal = {ACM Trans. Graph.},
  month = {jul},
  articleno = {166},
  numpages = {16},
  keywords = {eye modeling, specularity synthesis, relighting, refraction, pose optimization, HDR rendering, model fitting, volumetric rendering, geometry deformation modeling, differentiable rendering, novel view synthesis, neural rendering, NeRF, regazing}
}